More than 100 researchers from leading institutions have warned that unrestricted access to certain biological datasets could allow artificial intelligence (AI) systems to design or enhance dangerous pathogens, posing a significant biosecurity risk. In an open letter, scientists from Johns Hopkins University, the University of Oxford, Fordham University, and Stanford University urged stronger safeguards to prevent misuse while maintaining access for legitimate research.

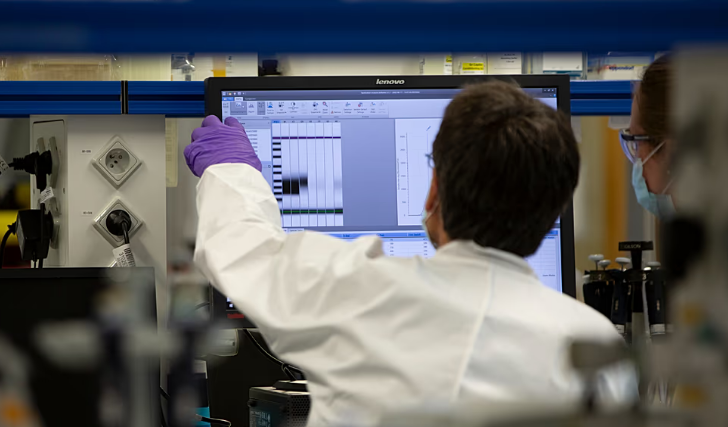

AI models in biology rely on large volumes of genetic data, including pathogen sequences and other biological information, to predict mutations, identify patterns, and generate new variants. While these tools have accelerated scientific discovery, the researchers caution that a small subset of data could enable AI to create transmissible viruses or other biological threats affecting humans, animals, or plants.

“The stakes of biological data governance are high, as AI models could help create severe biological threats,” the letter states. The authors describe this as a “capability of concern,” highlighting the potential for AI to simplify the creation of dangerous pathogens that could lead to pandemics.

The researchers propose a framework to regulate access to high-risk data. While most biological datasets should remain openly available, “concerning pathogen data” requires stricter security checks. Moritz Hanke, co-author from Johns Hopkins University, said, “Limiting access to sensitive pathogen data to legitimate researchers might be one of the most promising avenues for risk reduction.”

Currently, no universal guidelines exist for controlling these datasets. Some developers voluntarily exclude high-risk data, such as viral sequences, from AI training. The developers of Evo 2, an open-source AI model for biology built by the Arc Institute, Stanford, and TogetherAI, withheld sequences from pathogens that infect humans and other complex organisms to prevent the model being misused to develop bioweapons. EvolutionaryScale has adopted similar precautions for its ESM3 model.

The proposed framework introduces a five-tier system called Biosecurity Data Level (BDL) to categorise data by its potential risk. BDL-0 covers everyday biology data and carries no restrictions, while BDL-1 includes basic viral building blocks. BDL-2 covers traits of animal viruses and BDL-3 covers characteristics of human viruses. BDL-4 covers engineered viruses, such as enhanced COVID-19 mutations, and would face the strictest controls.
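For readers who think in code, the tiering can be pictured as an ordered scale. The Python sketch below is illustrative only and is not taken from the letter: the names `BDL` and `may_access` are hypothetical, and the rule that a user cleared to one level may also read everything below it is an assumption, since the article does not say how clearances would work.

```python
from enum import IntEnum


class BDL(IntEnum):
    """Biosecurity Data Levels as summarised in the article."""
    BDL_0 = 0  # everyday biology data, no restrictions
    BDL_1 = 1  # basic viral building blocks
    BDL_2 = 2  # traits of animal viruses
    BDL_3 = 3  # characteristics of human viruses
    BDL_4 = 4  # engineered viruses, strictest controls


def may_access(clearance: BDL, dataset_level: BDL) -> bool:
    """Assumed policy: a clearance covers its own level and all lower ones."""
    return dataset_level <= clearance


# An uncleared user can read open data but not engineered-virus data.
print(may_access(BDL.BDL_0, BDL.BDL_0))
print(may_access(BDL.BDL_0, BDL.BDL_4))
```

Modelling the levels as an ordered enumeration keeps the comparison to a single line; a real scheme would presumably attach vetting procedures to each level rather than a bare number.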

To ensure secure access, the researchers recommend technical tools such as watermarking, data provenance, audit logs with tamper-proof signatures, and behavioural biometrics to verify legitimate users and track misuse. Jassi Panu, co-author of the letter, emphasised that expert guidance is needed to determine which data pose meaningful risks, as some developers currently make best-guess decisions when excluding sensitive information.
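One of these tools, the signed audit log, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the design the researchers propose: the function names and record fields are hypothetical, and a keyed hash (HMAC) from the standard library stands in for a true digital signature. Each entry includes the previous entry's code, so altering or deleting an earlier record makes verification fail. Strictly speaking, this makes the log tamper-evident rather than tamper-proof.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # placeholder "previous code" for the first entry
FIELDS = ("user", "dataset", "action", "prev")


def _mac(key: bytes, body: dict) -> str:
    """Keyed SHA-256 hash over a canonical JSON encoding of the entry."""
    payload = json.dumps(body, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def append_entry(log: list, key: bytes, user: str, dataset: str, action: str) -> None:
    """Append an access record chained to the previous entry's code."""
    prev = log[-1]["mac"] if log else GENESIS
    body = {"user": user, "dataset": dataset, "action": action, "prev": prev}
    log.append({**body, "mac": _mac(key, body)})


def verify(log: list, key: bytes) -> bool:
    """Return True only if every entry is intact and correctly chained."""
    prev = GENESIS
    for entry in log:
        body = {name: entry[name] for name in FIELDS}
        if body["prev"] != prev:
            return False
        if not hmac.compare_digest(_mac(key, body), entry["mac"]):
            return False
        prev = entry["mac"]
    return True


key = b"demo-key-not-for-real-use"  # hypothetical key for illustration
log = []
append_entry(log, key, "alice", "dataset-A", "download")
append_entry(log, key, "bob", "dataset-B", "query")
print(verify(log, key))      # intact log
log[0]["user"] = "mallory"   # simulate tampering with an old record
print(verify(log, key))      # tampering is detected
```

A production system would more likely use public-key signatures so that auditors can check the log without holding the signing key.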

The scientists argue that balancing openness with strong security measures will be essential as AI tools grow more powerful and widely available, ensuring that biological research can advance without enabling potentially catastrophic misuse.